Custom OpenAI

API reference: https://platform.openai.com/docs/api-reference/chat

ProxyAI works with most OpenAI-compatible cloud providers, including Together.ai, Groq, and Anyscale. If your provider has no preset, you can set up a custom configuration.

Getting Started

Before you begin, make sure you understand the basics of REST API principles.

Chat Completions

In this example, we'll use Groq to power our messages and commands.

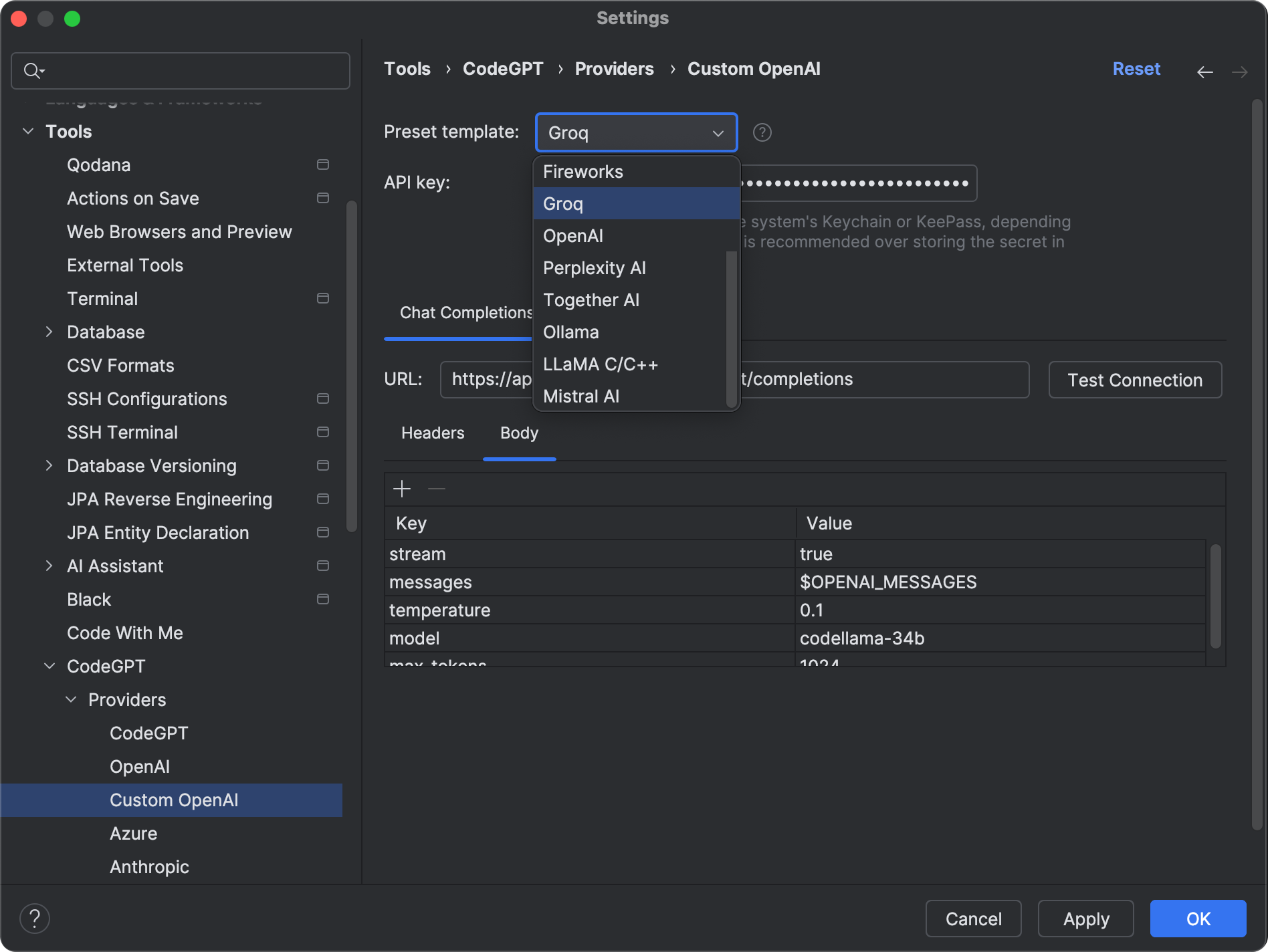

- Navigate to the plugin's settings via File > Settings/Preferences > Tools > ProxyAI > Providers > Custom OpenAI.

- Choose Groq from the Preset template dropdown.

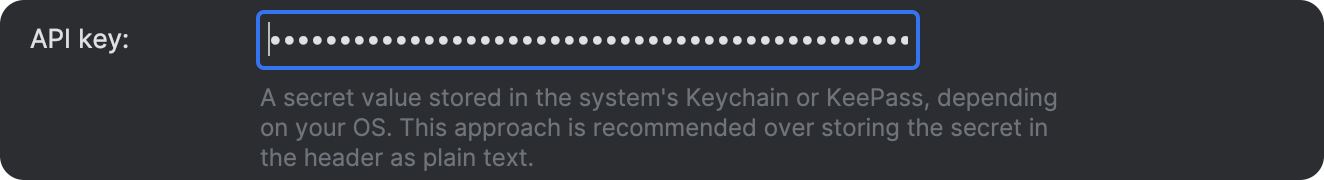

- Obtain your key from Groq's console and paste it into the designated field.

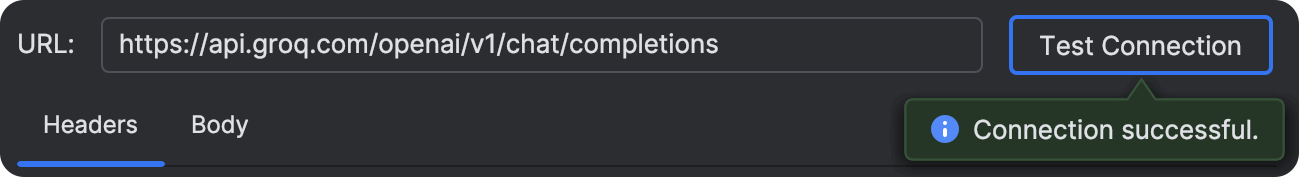

- Verify that everything is configured correctly and that the connection is successful.

- Click Apply or OK to save the changes.

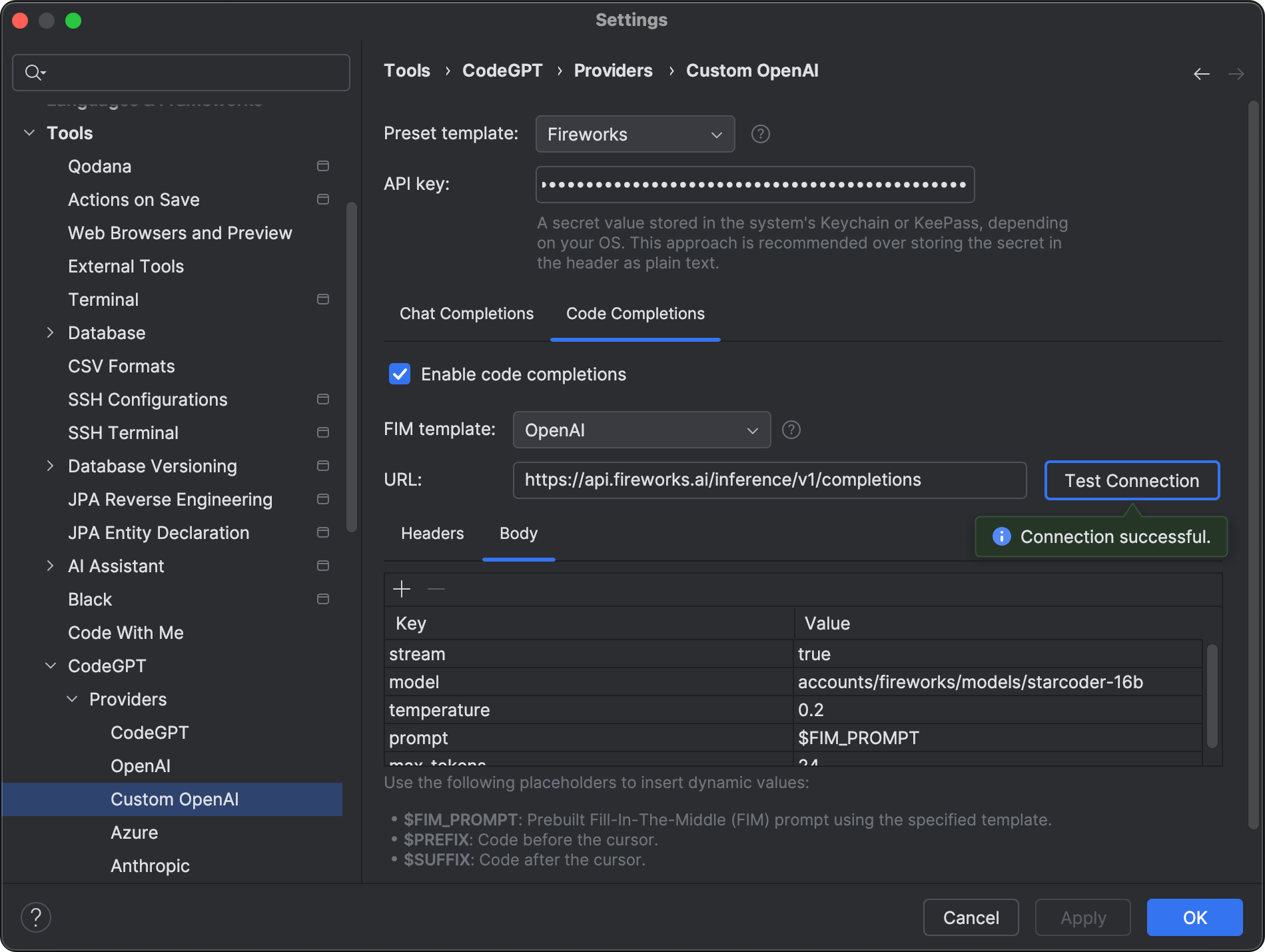
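For orientation, here is a minimal sketch of the kind of request an OpenAI-compatible provider expects once the preset is configured. It is an illustration under stated assumptions, not ProxyAI's actual implementation: `build_chat_request` is a hypothetical helper, `example-model` is a placeholder model name, and the URL is assumed to be Groq's OpenAI-compatible endpoint. The request is built but never sent.

```python
import json
import urllib.request

# Assumption: Groq's OpenAI-compatible chat completions endpoint.
GROQ_URL = "https://api.groq.com/openai/v1/chat/completions"


def build_chat_request(api_key: str, model: str, user_text: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": model,  # placeholder; check your provider's model list
        "messages": [{"role": "user", "content": user_text}],
        "stream": False,
    }
    return urllib.request.Request(
        GROQ_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("YOUR_API_KEY", "example-model", "Hello")
print(request.get_method(), request.full_url)
```

If the connection test in the settings fails, comparing the configured URL, headers, and body against a request shaped like this one is a reasonable first check.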

Code Completions

Groq doesn't provide an LLM that supports fill-in-the-middle (FIM) completions, but you can use StarCoder 16B via the Fireworks API. ProxyAI includes a preset template for Fireworks, so you only need to get an API key and add it in the settings field.
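To make the FIM idea concrete, the sketch below arranges the code before and after the cursor using the sentinel tokens from StarCoder's published prompt format. `build_fim_prompt` is a hypothetical helper, and the template that the Fireworks preset actually sends may differ, so treat this as an illustration only.

```python
def build_fim_prompt(prefix: str, suffix: str) -> str:
    """Arrange the code around the cursor in prefix-suffix-middle order.

    Assumption: the model understands StarCoder-style sentinel tokens.
    """
    return f"<fim_prefix>{prefix}<fim_suffix>{suffix}<fim_middle>"


# The model is expected to generate the text that belongs at the cursor,
# i.e. between the prefix and the suffix.
prompt = build_fim_prompt(
    "def add(a, b):\n    return ",
    "\n\nprint(add(1, 2))\n",
)
print(prompt)
```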

Advanced Request Configuration

The Headers and Body tabs support structured editing for complex request payloads.

- Add, edit, and remove individual headers and body properties.

- For body properties, choose a value type: String, Placeholder, Number, Boolean, Null, Object (JSON object), or Array (JSON array).

- Use Edit JSON in both tabs to edit the entire headers/body payload as raw JSON.

- JSON input is validated before saving.
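The validation step can be pictured with a short sketch. This is not ProxyAI's validator: `validate_json_object` is a hypothetical helper, and the requirement that the top level be a JSON object is an assumption based on the headers and body being edited as sets of properties.

```python
import json


def validate_json_object(raw: str) -> dict:
    """Parse raw JSON text and require a top-level object.

    Raises ValueError with the error position if the text is not valid JSON.
    """
    try:
        parsed = json.loads(raw)
    except json.JSONDecodeError as err:
        raise ValueError(
            f"Invalid JSON at line {err.lineno}, column {err.colno}: {err.msg}"
        ) from err
    if not isinstance(parsed, dict):
        raise ValueError("Expected a JSON object at the top level")
    return parsed


# Each value type from the editor has a direct JSON counterpart.
body = validate_json_object(
    '{"model": "my-model", "temperature": 0.2, "stream": true, '
    '"stop": null, "options": {"n": 1}, "tags": ["a", "b"]}'
)
print(sorted(body))
```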

Placeholders

You can use the following placeholders in Custom OpenAI request configs:

- $OPENAI_MESSAGES: Replaced with structured OpenAI-format messages (JSON array).

- $PROMPT: Replaced with concatenated message content.

- $CUSTOM_SERVICE_API_KEY: Replaced with the API key from your Custom OpenAI settings.
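The difference between $OPENAI_MESSAGES and $PROMPT is easiest to see side by side. The sketch below is illustrative only: `to_prompt` is a hypothetical helper, and the blank-line separator is an assumption, since the exact way ProxyAI concatenates message content is not specified here.

```python
import json

messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Explain recursion."},
]


def to_prompt(msgs: list[dict]) -> str:
    """Join message contents into one string, dropping the role information."""
    # Assumption: contents are separated by a blank line.
    return "\n\n".join(m["content"] for m in msgs)


# $OPENAI_MESSAGES keeps the structure (a JSON array of role/content objects).
print(json.dumps(messages, indent=2))
# $PROMPT is a single flat string.
print(to_prompt(messages))
```

Use $OPENAI_MESSAGES for chat-style endpoints that expect a messages array, and $PROMPT for endpoints that take a single text field.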

Nested Params Support

Placeholder and API key replacement works recursively, including inside nested objects and arrays in the request body.

This enables payloads like:

```json
{
  "model": "my-model",
  "payload": {
    "prompt_alias": "$PROMPT",
    "messages_alias": "$OPENAI_MESSAGES",
    "auth": "Bearer $CUSTOM_SERVICE_API_KEY",
    "items": [
      {
        "kind": "prompt",
        "value": "$PROMPT"
      },
      {
        "kind": "messages",
        "value": "$OPENAI_MESSAGES"
      }
    ]
  }
}
```

If ProxyAI sends a non-stream request, any stream field (including nested ones) is automatically normalized to false.
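The recursive behavior described in this section can be sketched as two small tree walks. This is a minimal illustration, not ProxyAI's code: `substitute` and `force_non_stream` are hypothetical names, and the rule that a string consisting only of a placeholder takes on the replacement's type (for example, a JSON array) is an assumption.

```python
from typing import Any


def substitute(node: Any, values: dict[str, Any]) -> Any:
    """Recursively replace placeholders in dicts, lists, and strings."""
    if isinstance(node, dict):
        return {key: substitute(child, values) for key, child in node.items()}
    if isinstance(node, list):
        return [substitute(child, values) for child in node]
    if isinstance(node, str):
        if node in values:
            # Whole-string placeholder: keep the replacement's type (e.g. a list).
            return values[node]
        for name, value in values.items():
            if isinstance(value, str):
                # Embedded placeholder, e.g. "Bearer $CUSTOM_SERVICE_API_KEY".
                node = node.replace(name, value)
        return node
    return node


def force_non_stream(node: Any) -> Any:
    """Return a copy in which every "stream" key, at any depth, is False."""
    if isinstance(node, dict):
        return {
            key: False if key == "stream" else force_non_stream(child)
            for key, child in node.items()
        }
    if isinstance(node, list):
        return [force_non_stream(child) for child in node]
    return node


values = {
    "$OPENAI_MESSAGES": [{"role": "user", "content": "Hi"}],
    "$PROMPT": "Hi",
    "$CUSTOM_SERVICE_API_KEY": "secret",
}
body = {
    "stream": True,
    "payload": {
        "auth": "Bearer $CUSTOM_SERVICE_API_KEY",
        "items": [{"kind": "messages", "value": "$OPENAI_MESSAGES", "stream": True}],
    },
}
result = force_non_stream(substitute(body, values))
print(result)
```

Both functions build new structures instead of mutating their input, so the stored configuration stays unchanged between requests.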